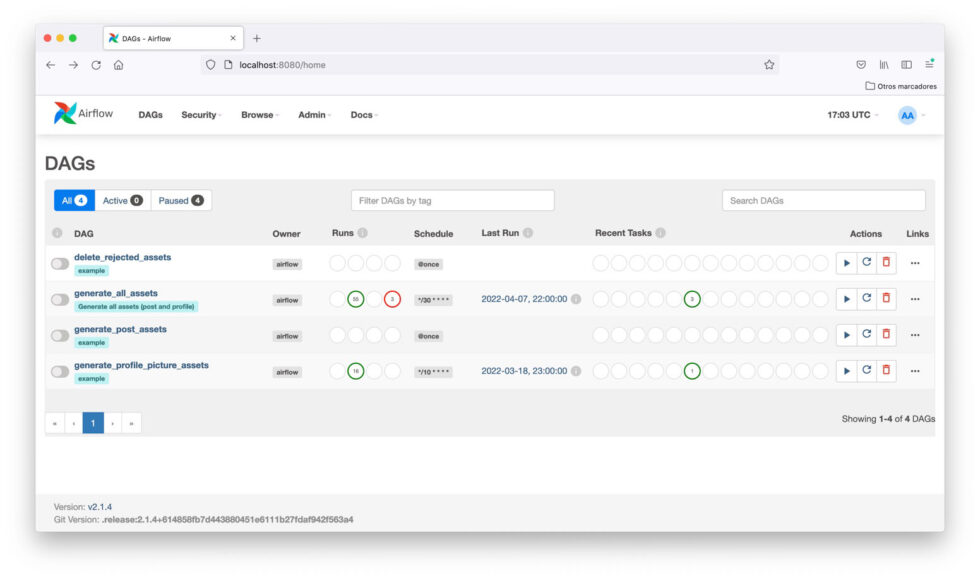

Apache Airflow is an open-source platform to programmatically author, schedule, and monitor workflows. It was originally developed by the engineering team at Airbnb and was later donated to the Apache Software Foundation, where it is licensed under Apache 2.0.

Airflow is commonly used in data engineering and data science pipelines to automate the execution of tasks such as data transformation, loading and analysis. It is also used in other industries, such as finance, healthcare and e-commerce, to automate business processes.

Airflow is very flexible with regard to what it can connect to. This includes data lakes, data warehouses, databases, APIs and, of course, object stores. It excels in use cases that benefit from data-pipelines-as-code, such as machine learning model training and retraining.

Airflow is written in Python and uses a directed acyclic graph (DAG) to represent the workflow. Each node in the DAG represents a task, and the edges between the nodes represent dependencies between the tasks. A DAG does not care about what the tasks themselves do, only about how they are run: the order, the number of retries, and so on. A complex DAG can become brittle and difficult to troubleshoot, particularly if there are dozens of tasks that must be managed by the architect.

Airflow provides a web interface to manage and monitor workflows, as well as an API to create, update, and delete them. It also has a rich set of features, including support for scheduling, alerting, testing, and version control.

MinIO is the perfect companion for Airflow because of its industry-leading performance and scalability, which puts every data-intensive workload within reach. You can store petabytes of data in MinIO buckets and build Airflow pipelines that process it, with storage fast enough to let DAGs run as quickly as possible. Once the processing is done, you can even store the end result back in a MinIO bucket for other tools to consume.
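To make the DAG description above concrete, here is a minimal sketch of the idea using only the Python standard library (`graphlib`, Python 3.9+). It is not Airflow's API: `run_dag`, the task names, and the `retries` parameter are hypothetical and chosen for illustration. The point is that the graph knows only the order and the retry policy, not what each task does.

```python
from graphlib import TopologicalSorter


def run_dag(tasks, dependencies, retries=1):
    """Run callables in dependency order, retrying each failed task.

    tasks: dict mapping task name -> zero-argument callable
    dependencies: dict mapping task name -> set of upstream task names
    retries: extra attempts allowed per task after the first failure
    """
    # Include tasks that have no upstream dependencies in the graph.
    graph = {name: set(dependencies.get(name, ())) for name in tasks}
    completed = []
    # static_order() raises graphlib.CycleError if the graph has a cycle.
    for name in TopologicalSorter(graph).static_order():
        for attempt in range(retries + 1):
            try:
                tasks[name]()
                break
            except Exception:
                # Re-raise once the retry budget is exhausted.
                if attempt == retries:
                    raise
        completed.append(name)
    return completed


# Illustrative three-step pipeline; "transform" fails once, then succeeds.
log = []
attempts = {"transform": 0}


def extract():
    log.append("extract")


def transform():
    attempts["transform"] += 1
    if attempts["transform"] == 1:
        raise RuntimeError("transient failure")
    log.append("transform")


def load():
    log.append("load")


tasks = {"load": load, "transform": transform, "extract": extract}
dependencies = {"transform": {"extract"}, "load": {"transform"}}

order = run_dag(tasks, dependencies, retries=1)
print(order)  # ['extract', 'transform', 'load']
```

The tasks are declared in an arbitrary order, but the dependency edges alone determine the execution order. In a real deployment, Airflow's scheduler handles ordering, retries, and state tracking for you; this sketch only mirrors the concept.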
